[Auto]Stitching Photo Mosaics

CS 194-26 Project #7A · Owen Jow · November 2016

[0] Setting the Scene

For this project, we produce seamlessly stitched mosaics by shooting overlapped photographs with an identical center of projection, computing projective transformations between those photographs, warping them toward whichever is selected as a base image, and finally blending all of the processed photographs into one big panorama.

To begin, we shoot pictures that are related by a projective transformation (by taking pictures from the same point of view but with different view directions). We are also careful to ensure that consecutive photographs have some common content, because in order to recover the transform between two images we have to select corresponding points in the images' overlapping scenes.

[1] Solving for the Homography Matrix

In order to identify the transformation between any two of our images, we need to compute the homography matrix \(H\). We can relate a pair of corresponding points through the equation \(\textbf{p}' = H\textbf{p}\), where \(\textbf{p}\) is an \(\begin{bmatrix}x & y & 1\end{bmatrix}^T\) vector representing a point in the first image and \(\textbf{p}'\) is a \(\begin{bmatrix}wx' & wy' & w\end{bmatrix}^T\) representing a scaled point in the second image. \(H\), incidentally, is what we're solving for and exists as a 3 x 3 matrix with eight degrees of freedom.

$$

\begin{bmatrix}

wx' \\

wy' \\

w

\end{bmatrix}

=

\begin{bmatrix}

a & b & c \\

d & e & f \\

g & h & 1

\end{bmatrix}

\begin{bmatrix}

x \\

y \\

1

\end{bmatrix}

$$

In other words, we need to obtain values for \(a, b, ..., h\) given a set of \((x, y)\) and \((x', y')\) points. To do this, we flatten \(H\) into a vector \(\textbf{h}\) containing the eight unknowns and attempt to set up the matrix equation \(A\textbf{h} = \textbf{b}\). After setting \(b\) to be the vector of all the \((x', y')\) points, we are left to derive only the entries of \(A\). Accordingly, we focus on relating our givens – the \((x, y)\) and \((x', y')\) coordinates – using \(a, b, ..., h\).

It follows from the original homography equation that

$$

ax + by + c = wx' \\

dx + ey + f = wy' \\

gx + hy + 1 = w

$$

We can easily rearrange these equations into equalities for \(x'\) and \(y'\):

$$

\rightarrow \: ax + by + c = (gx + hy + 1)x' \\

ax + by + c - gxx' - hx'y = x' \\~\\

\rightarrow \: dx + ey + f = (gx + hy + 1)y' \\

dx + ey + f - gxy' - hyy' = y'

$$

At this point, we can rewrite our equalities as a matrix equation that's more or less in the \(A\textbf{h} = \textbf{b}\) form we're looking for.

$$

\begin{bmatrix}

x & y & 1 & 0 & 0 & 0 & -xx' & -x'y \\

0 & 0 & 0 & x & y & 1 & -xy' & -yy'

\end{bmatrix}

\begin{bmatrix}

a \\

b \\

c \\

d \\

e \\

f \\

g \\

h

\end{bmatrix}

=

\begin{bmatrix}

x' \\

y'

\end{bmatrix}

$$

Then, through inspection of the above relationship, we are able to reach our final system of \(2n\) equations. (\(n\) is the number of point correspondences between the two images). Since we have eight unknowns, we need \(n \geq 4\) in order to successfully solve for \(\textbf{h}\). Meanwhile, for stability's sake we will ideally have more than four correspondences (i.e. an overdetermined linear system of equations), so we solve for \(\textbf{h}\) using least-squares.

$$

\begin{bmatrix}

x_1 & y_1 & 1 & 0 & 0 & 0 & -x_1x_1' & -x_1'y_1 \\

0 & 0 & 0 & x_1 & y_1 & 1 & -x_1y_1' & -y_1y_1' \\

x_2 & y_2 & 1 & 0 & 0 & 0 & -x_2x_2' & -x_2'y_2 \\

0 & 0 & 0 & x_2 & y_2 & 1 & -x_2y_2' & -y_2y_2' \\

\vdots & \vdots & \vdots & \vdots & \vdots & \vdots & \vdots & \vdots \\

x_n & y_n & 1 & 0 & 0 & 0 & -x_nx_n' & -x_n'y_n \\

0 & 0 & 0 & x_n & y_n & 1 & -x_ny_n' & -y_ny_n'

\end{bmatrix}

\begin{bmatrix}

a \\

b \\

c \\

d \\

e \\

f \\

g \\

h

\end{bmatrix}

=

\begin{bmatrix}

x_1' \\

y_1' \\

x_2' \\

y_2' \\

\vdots \\

x_n' \\

y_n'

\end{bmatrix}

$$

Dimensionally speaking, we have (a \(2n\) x 8 matrix) \(\cdot\) (an 8 x 1 vector) = (a \(2n\) x 1 vector). After we've solved for \(\textbf{h}\), we can turn it into a 9 x 1 vector by appending a 1 (that being the scaling factor) and reshape it into a 3 x 3 matrix to serve as our final homography transformation. To reiterate, this matrix can be used to warp points in the first image into the second image's coordinate system.

[2] Applying Our Homography

Let's go into a little more detail on that front. We're warping an image, which means we're taking the colors that were already in the image and moving them to different places.

However, in our case we actually want to make use of an inverse warp. An inverse warp asks, for every pixel location in the output shape, "which color from the input image should go here?" The answer to that is usually a combination of input colors, so we bilinearly interpolate across these in order to make sure we don't miss anything.

Mathematically, of course, it's our homography that tells us the locations of the input colors to use. To derive the inverse warp, we begin again from our initial equation (but en masse): \(P' = HP\). This time, \(P\) is a 3 x \(n\) matrix containing all of the points from the input image (the one we're warping; the one whose colors we're moving around) in homogeneous coordinates. Likewise, \(P'\) is a 3 x \(n\) matrix containing scaled points from the image whose coordinate system we're warping to. \(H\), as before, is the homography matrix that converts \(P\)'s spatial domain into \(P'\)'s spatial domain.

$$

P' = HP \\

\begin{bmatrix}

w_1x_1' & w_2x_2' & \cdots & w_nx_n' \\

w_1y_1' & w_2y_2' & \cdots & w_ny_n' \\

w_1 & w_2 & \cdots & w_n

\end{bmatrix}

=

\begin{bmatrix}

a & b & c \\

d & e & f \\

g & h & 1

\end{bmatrix}

\begin{bmatrix}

x_1 & x_2 & \cdots & x_n \\

y_1 & y_2 & \cdots & y_n \\

1 & 1 & \cdots & 1

\end{bmatrix}

$$

We want the inverse warp, though, so we look for \(P\) in terms of \(P'\) – the input location corresponding to every output location. Once we have these, we can look up the colors around each of these input locations ("around" because the locations might be between pixels) and interpolate.

$$

P = H^{-1}P' \\

\begin{bmatrix}

x_1 & x_2 & \cdots & x_n \\

y_1 & y_2 & \cdots & y_n \\

1 & 1 & \cdots & 1

\end{bmatrix}

=

H^{-1}

\begin{bmatrix}

w_1x_1' & w_2x_2' & \cdots & w_nx_n' \\

w_1y_1' & w_2y_2' & \cdots & w_ny_n' \\

w_1 & w_2 & \cdots & w_n

\end{bmatrix}

$$

Equivalently (if, for example, a certain SciPy function requires that the image points be row vectors), we can use transpose properties to define

$$

\begin{bmatrix}

x_1 & y_1 & 1 \\

x_2 & y_2 & 1 \\

\vdots & \vdots & \vdots \\

x_n & y_n & 1

\end{bmatrix}

=

\begin{bmatrix}

w_1x_1' & w_1y_1' & w_1 \\

w_2x_2' & w_2y_2' & w_2 \\

\vdots & \vdots & \vdots \\

w_nx_n' & w_ny_n' & w_n

\end{bmatrix}

(H^{-1})^T

$$

Realistically, however, we won't know the \(w_i\) values ahead of time (for \(i \in [1 ... n]\)). What we'll actually compute is:

$$

\begin{bmatrix}

v_1x_1 & v_1y_1 & v_1 \\

v_2x_2 & v_2y_2 & v_2 \\

\vdots & \vdots & \vdots \\

v_nx_n & v_ny_n & v_n

\end{bmatrix}

=

\begin{bmatrix}

x_1' & y_1' & 1 \\

x_2' & y_2' & 1 \\

\vdots & \vdots & \vdots \\

x_n' & y_n' & 1

\end{bmatrix}

(H^{-1})^T

$$

This isn't a problem, since we can simply renormalize each of the row vectors until the third coordinate is always 1. Anyway (as mentioned like three times), we can use the input image locations to complete our inverse warp via color interpolation! At this juncture, we're fully able to warp an image based on a pointwise projective transformation.

[3] Rectifying Images

Enough math. Let's take what we've done so far and produce something visual! As a test of projective warp correctness, we attempt to rectify a couple of images. Specifically, we'll try to set up our warps so that planar surfaces are frontal-parallel in the output image.

In the examples below, we select four input points over the corners of some planar surface, and then use the rectified version of those points as the corresponding \(P'\) coordinates.

Sunset view from my apartment (11/11/16)

|

Rectified using sq. window as a basis

|

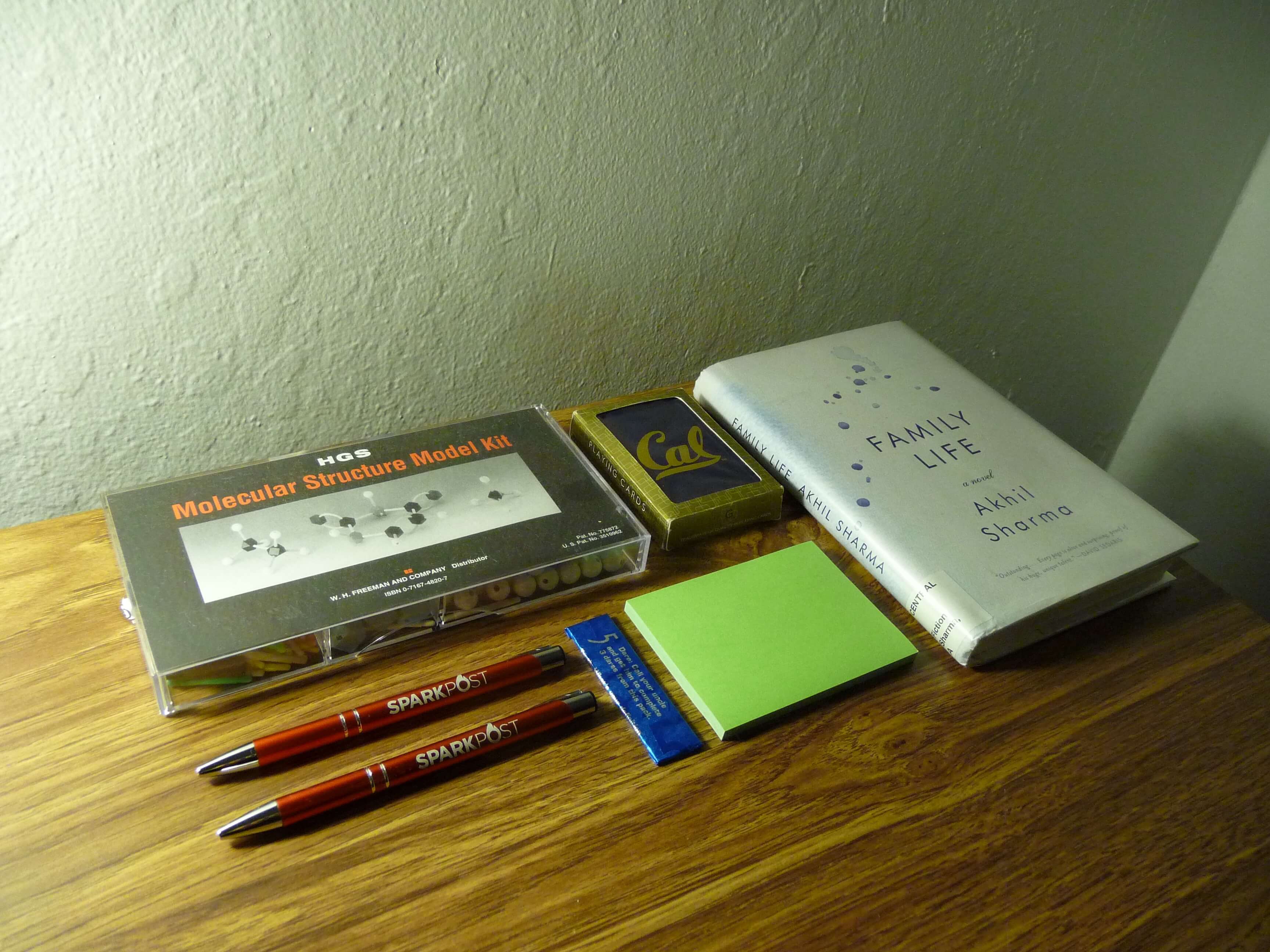

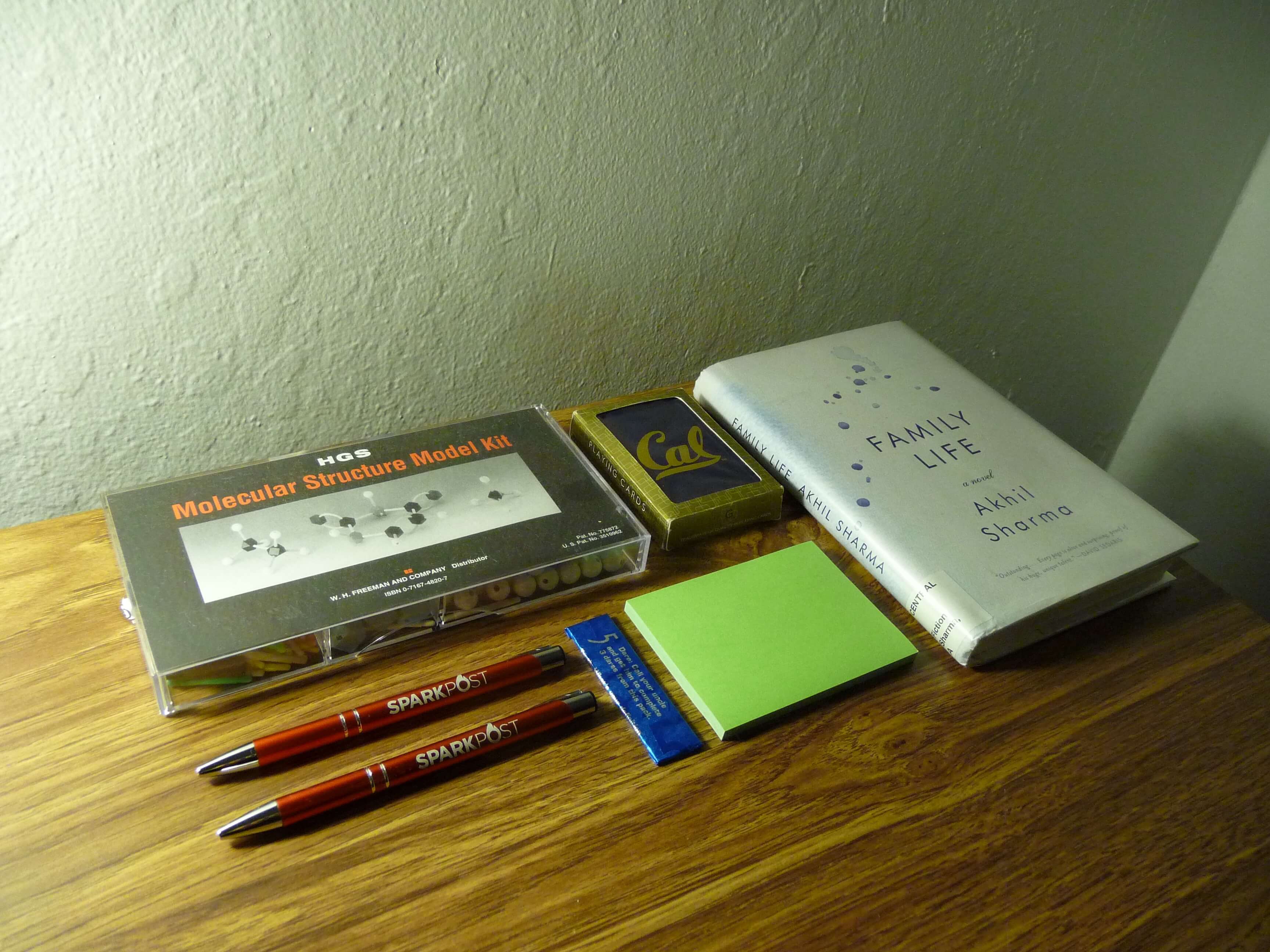

Assortment of rectangular objects

|

Rectified over Family Life

|

[4] Producing Panoramic Mosaics

Here, we warp and blend a sequence of overlapping images together – creating, in expectation, a nicely stitched panorama. Given a set of photographs that don't span more than 180 degrees (faraway scenes work well, since we can then project everything onto a plane at infinity), we start by selecting a centrally positioned photograph to serve as the base panorama image. We then project and blend all other images one-by-one into this photograph's coordinate system, amassing a holistic panorama as we go.

That's pretty much it!

Since individual images are projected directly onto their correct coordinates in the overall panorama, we don't need to align anything after a warp. All we need to do is blend, which we handle through weighted averaging at every pixel. To facilitate this weighting, we maintain alpha values for each image's pixels that represent how far every pixel is from the unwarped image's center column, i.e. how important the pixel is to its source. We assign the alpha value for the center column to 1, and let this value fall off linearly as it approaches the sides (such that it is 0 at the edges).

So... after warping, we compute the output pixels specific to the individual image, the output pixels specific to the growing panorama image, and the output pixels at which there is overlap. For the non-overlap pixels, we use the unmodified RGB values from their source images. For the overlap pixels, we normalize the alpha values so that they sum to 1 and then linearly interpolate between the color values of each image (using the normalized alphas as weights). Finally, we set the entire alpha channel to 1 in the output panorama at each stage in order to get rid of any residual fading effects.

Yeah... below we have some results. The photographs are from me biking up to Indian Rock and taking a bunch of pictures of the distance; they yielded some decent mosaics in my opinion (especially after cropping!).

Cropped view from a rock, pt. 1

|

View from a rock, pt. 1

|

Left image

|

Center image

|

Right image

|

The second panorama also turned out all right. However, success was tempered by the fact that I – being an unprofessional panorama photographer – managed to capture a bunch of pesky and ephemerally present humans across the bottom of my photographs. Even after being blended into the final image, their ghosts can still be observed.

Cropped view from a rock, pt. 2

|

View from a rock, pt. 2

|

Left image

|

Center image

|

Right image

|

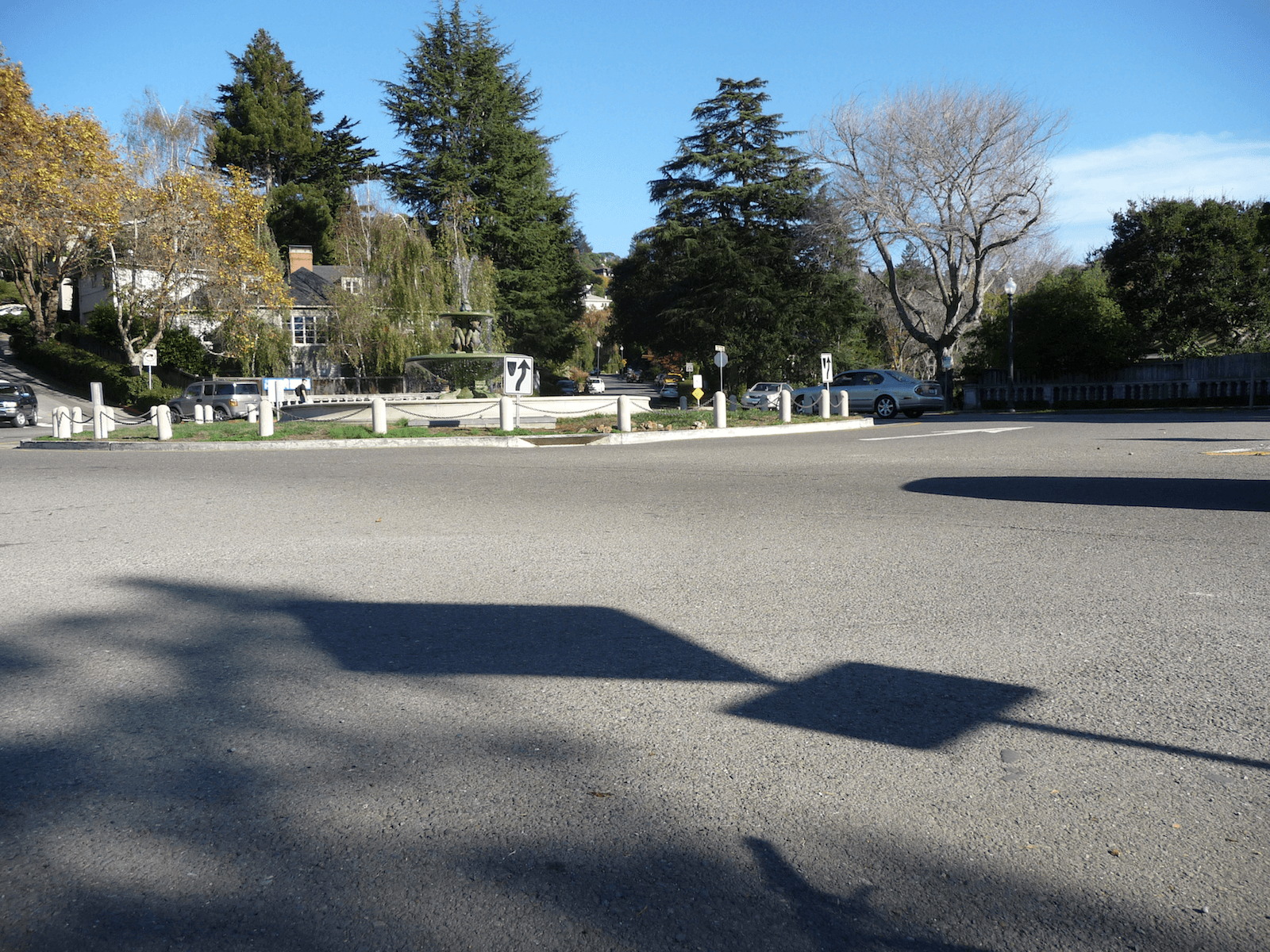

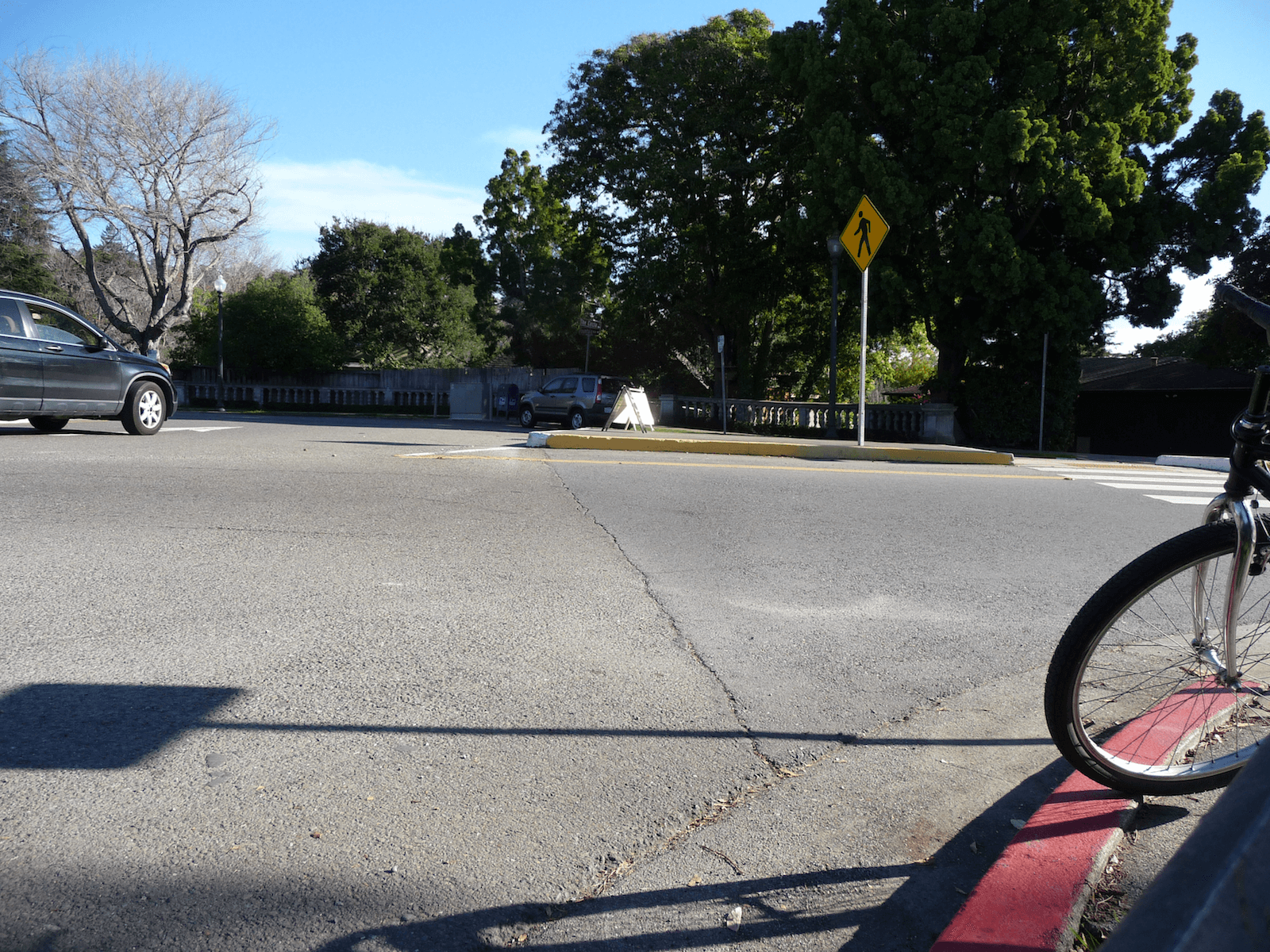

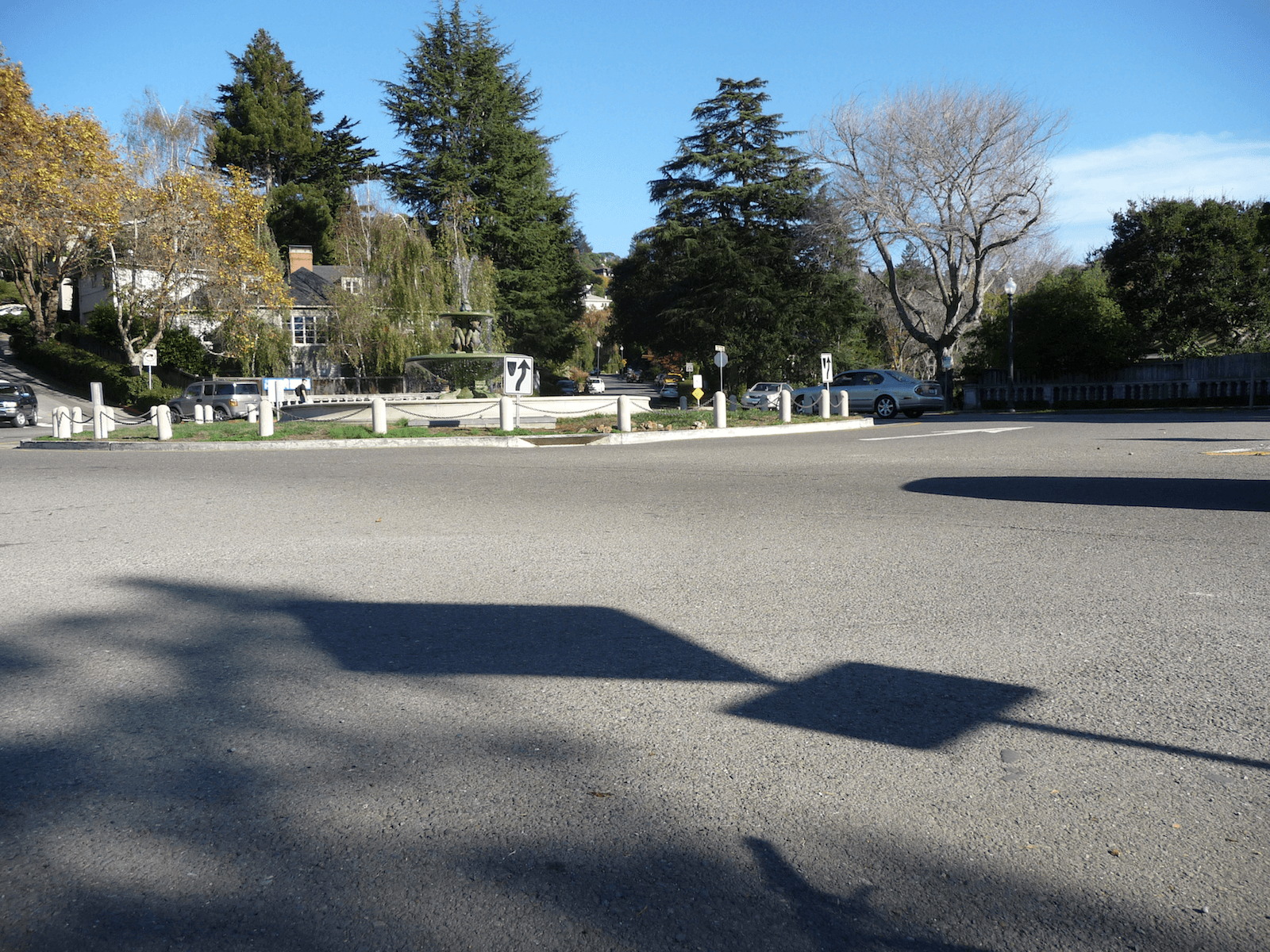

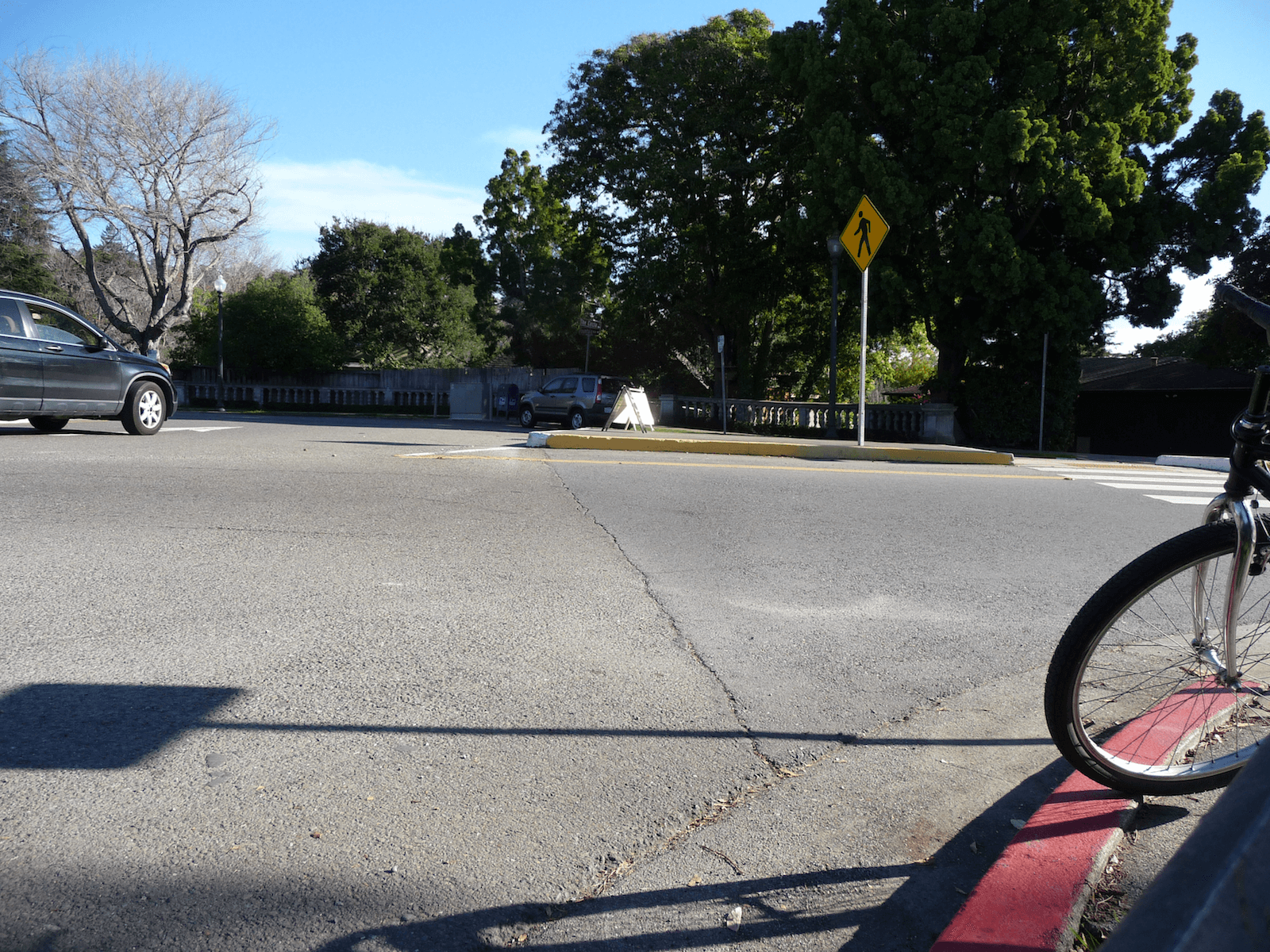

Technically speaking, my third panorama was the least successful because it contained a lot of moving elements (in the form of automobiles) and also spanned more than 180 degrees of view. You can see from the top right corner that the third image's projection is beginning to warp out of control! You might also notice a car without its shadow, and a shadow without its car.

I think I also messed up somewhat on keeping my center of projection constant.

"The Circle", cropped

|

It's up north somewhere

|

Left image

|

Center image

|

Right image

|

[5] Looking Back

First of all, I learned that planar mappings don't really work for > 180° mosaics, as I spent an embarrassing amount of time trying to warp and merge two images that spanned an excessive view space.

However, I'm glad I got to experience the power of homographies / projective transformations. It was very exciting to be able to modify the perspective of my images in a specific way, and to be able to stitch multiple photographs into one! I never realized that it was possible to achieve these effects with just a 3 x 3 matrix and some blending.

Anyhow, I leave you with a rectified image of my lunch.

Thanks for reading! :)

Lunchtime carnage

|

Rectified carnage

|